Introduction

Because Pacu and our AWS pentesting services simulate attacks on cloud architecture, we often get questions about how AWS keys get lost in the first place (or even statements that such an event is unlikely). To address these concerns, we’ve written this blog post to walk through the many ways this could happen.

1. Git Repository Misconfigurations

Although it breaks best practice rules, AWS keys are often shared between developers and engineers in companies, which means that the keys are hosted in a shared location on an internal network, such as a private Git repository. This could give rogue users on the internal network access to AWS, and because the keys are shared, logging could not necessarily pinpoint the source of the compromise.

Another problem can come from .gitignore files, where developers are expected to keep a local environment variables file for secrets, such as AWS keys. Sometimes that file gets renamed or moved, which causes it to no longer be covered by the .gitignore rules. Then, without thinking much about it, a developer could accidentally push a commit to a shared repository that includes their personal AWS keys.

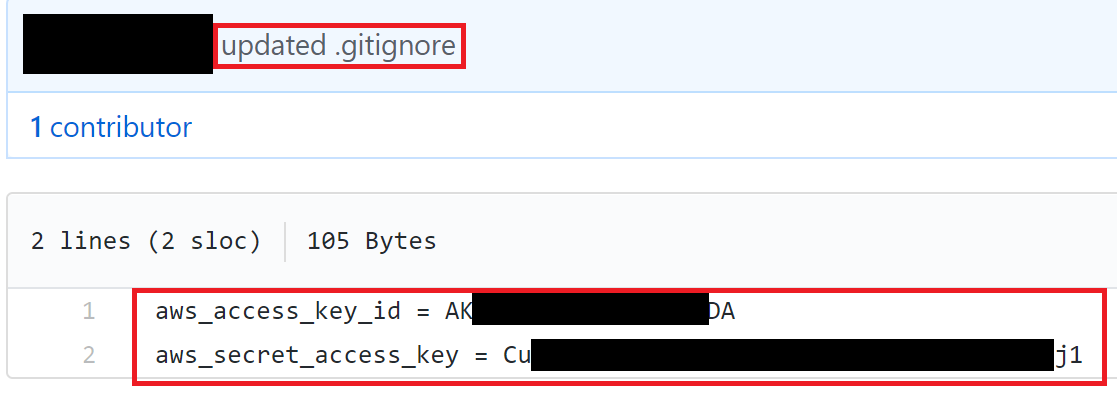

The screenshot above shows an environment variables file on GitHub that was accidentally made public. Whoever uploaded this file has exposed their AWS API keys to the world. We can also see that the commit message for this upload was “updated .gitignore”, which means they likely made a mistake and unignored their local environment file.

AWS credentials exposed to the internet

AWS scans GitHub commits in search of AWS API keys and will often be able to notify you within minutes of them being publicly pushed to your repositories, so you can respond accordingly. Attackers employ the same scanning techniques, however, so it is very possible for someone to gain access to your environment in the small window of time before you respond to the alert. Also, if you aren’t using GitHub for repository management, AWS’s built-in defenses may not be able to protect you or alert you to such exposure, while a determined attacker can still find the keys and gain access.

The most notable case of keys being compromised through this method is from the 2016 breach of Uber, where attackers gained access to a Git repository of Uber’s and were able to pull AWS credentials that were being stored in it (source).

In our own assessments, we will attempt to find and scan public information repositories (such as Git, Pastebin, etc.) to try and find secrets such as AWS keys, and are quite frequently successful in doing so.
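As a rough illustration of this kind of scanning, the sketch below searches a blob of text for strings shaped like AWS access key IDs. It assumes the common 20-character format (a four-letter prefix such as `AKIA` for long-term keys or `ASIA` for temporary keys, followed by 16 uppercase letters or digits). The function name and sample text are hypothetical, and this is not a description of the tooling AWS, GitHub, or Rhino actually uses.

```python
import re

# Candidate access key IDs: "AKIA" (long-term) or "ASIA" (temporary),
# followed by 16 uppercase letters or digits. This is an assumed pattern
# for illustration; a match is only a lead, not proof of a live key.
ACCESS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[A-Z0-9]{16}\b")


def find_access_key_ids(text):
    """Return (line_number, key_id) pairs for every candidate key ID in text."""
    hits = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for match in ACCESS_KEY_ID.finditer(line):
            hits.append((line_number, match.group(0)))
    return hits


# Hypothetical environment file contents using AWS's documented example key ID.
sample = "DEBUG=true\nAWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE\n"
print(find_access_key_ids(sample))  # prints [(2, 'AKIAIOSFODNN7EXAMPLE')]
```

The same check could run as a pre-commit step over staged files, so a renamed environment file is caught before it is pushed.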

2. Social Engineering of AWS Users

Just like users of any other service that accepts usernames and passwords for logging in, AWS users are vulnerable to social engineering attacks. Fake emails, calls, or any other method of social engineering could put an AWS user’s credentials in the hands of an attacker.

If a user only uses API keys for accessing AWS, general phishing techniques could still be employed to gain access to other accounts or their computer itself, where the attacker could then pull the API keys for said AWS user.

With basic open-source intelligence (OSINT), it is generally simple to gather a list of employees of a company that use AWS on a regular basis. This list can then be targeted with spear phishing to try and gather credentials. A simple technique may include an email that says your bill has spiked 500% in the past 24 hours, with a “click here for more information” link that forwards the victim to a malicious copy of the AWS login page built to steal their credentials.

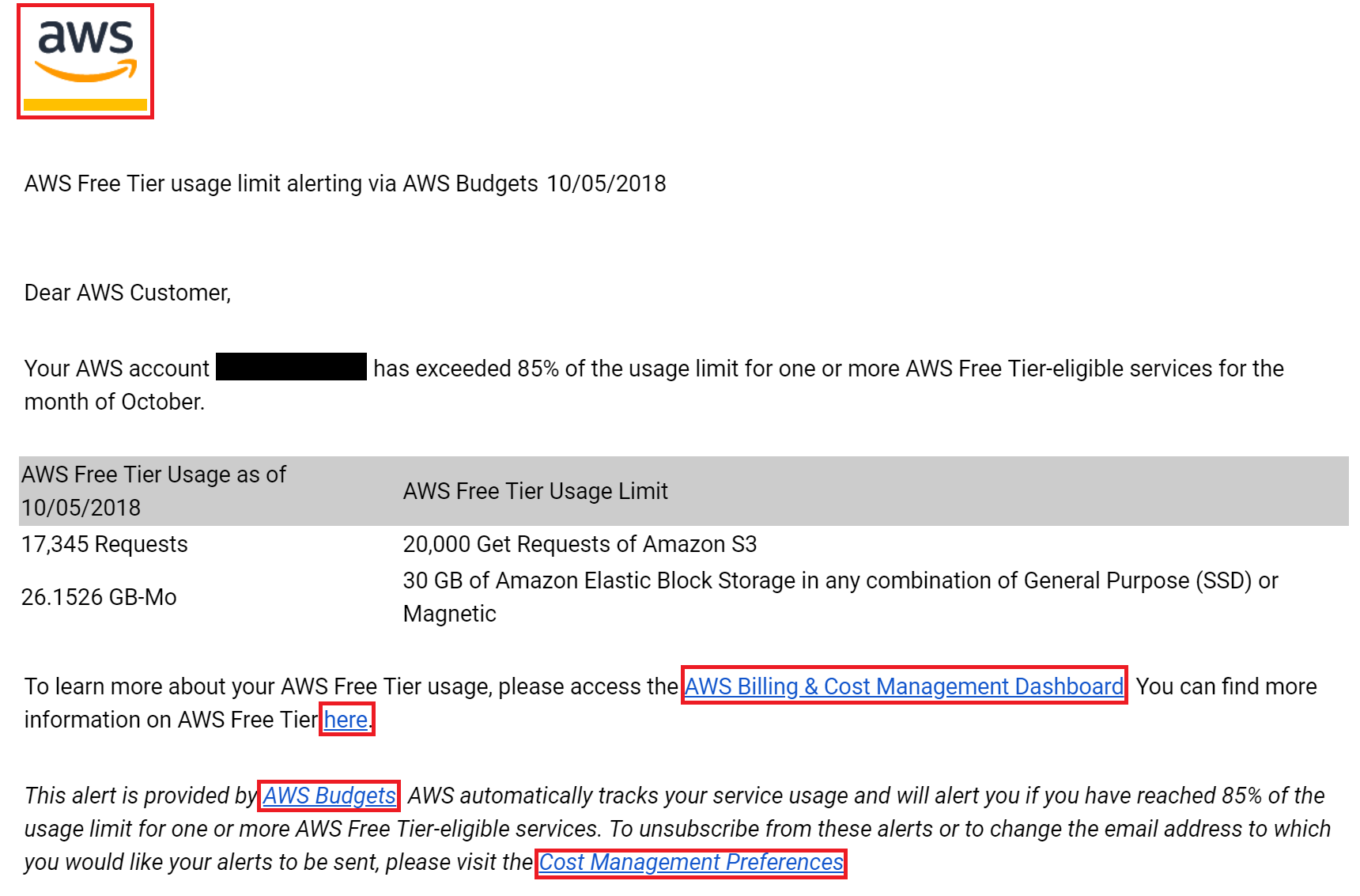

An example of such an email can be seen in the screenshot above. It looks exactly like an email that AWS would send to you if you were to exceed the free tier limits, except for a few small changes. If you clicked on any of the highlighted regions in the screenshot, you would not be taken to the official AWS website; you would instead be forwarded to a fake login page set up to steal your credentials.

A fake email sent to steal AWS credentials

These emails can get even more specific by performing a little bit more OSINT prior to sending them out. If an attacker was able to discover your AWS account ID online somewhere, they could employ methods we at Rhino have released previously to enumerate what users and roles exist in your account without any logs showing up on your side. They could use this list to further refine their target list, as well as their emails to reference services they may know that you regularly use.

For reference, the blog post for using AWS account IDs for role enumeration can be found here and the blog post for using AWS account IDs for user enumeration can be found here.

During engagements at Rhino, we find that phishing is one of the quickest ways for us to gain access to an AWS environment.

3. Password Reuse in Cloud Architecture

People often reuse the same password across a variety of services. If one of those passwords is compromised in any way, an attacker may be able to gain access to other, unrelated services with the same credentials. It is very common for a website to be compromised and to have its database leaked, which could include password hashes or cleartext passwords. Once that information is available to the public, anyone who was a user of the compromised website is vulnerable to compromise on any other service that shares the leaked password.

For this reason, it is bad practice to use the same password across multiple services, but it is still extremely common.

During engagements at Rhino, we typically scan 3rd party sources for leaked passwords of employees at a target company, where we will then try to utilize those to see if there is any “low hanging” access available to the environment. We often find that even passwords leaked as long as 2 years ago are reused throughout someone’s other accounts, which gives us easy, privileged access to resources we are not supposed to be able to reach. Even if a password wasn’t leaked online, in some environments we only need to breach a single password and if it is used for multiple services, then we have gained access to all those services.

4. Vulnerabilities in AWS-Hosted Applications

Server-Side Request Forgery

A common, but potentially devastating, web application vulnerability is server-side request forgery, in which an attacker can make arbitrary web requests from a compromised server to a target of their choice. If an attacker finds this vulnerability in a web application and can make requests from an EC2 instance, they can target the internal EC2 meta-data API (read more on EC2 meta-data here). In many cases, applications need access to the AWS API, so an IAM instance profile can be attached to an EC2 instance to provide it the ability to request temporary AWS credentials. This is all done through the EC2 meta-data API, so an attacker can make an HTTP request to that meta-data URL and gain access to the same temporary credentials that the application uses.

These credentials can be pulled from an EC2 instance with an instance profile attached by making an HTTP GET request to the following URL:

- http://169.254.169.254/latest/meta-data/iam/security-credentials/

That URL will return the name of the IAM role attached through the instance profile, so one more follow-up HTTP GET request must be made to the following URL, with that name appended:

- http://169.254.169.254/latest/meta-data/iam/security-credentials/the_profile_name

That URL will return a JSON object that contains an AWS access key ID, secret access key, and session token, which allows whoever made that request access to the AWS environment.
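The two requests described above can be sketched as follows. This is a minimal illustration, assuming the meta-data service answers plain GET requests as the text describes and that the JSON document uses the `AccessKeyId`, `SecretAccessKey`, and `Token` fields. The helper names `http_get` and `fetch_instance_credentials` are hypothetical, and `http_get` stands in for whatever request primitive an SSRF vulnerability provides.

```python
import json
import urllib.request

BASE_URL = "http://169.254.169.254/latest/meta-data/iam/security-credentials/"


def http_get(url, timeout=2):
    """Plain HTTP GET; a stand-in for the request an SSRF bug would make."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8")


def fetch_instance_credentials(get=http_get):
    """Perform the two-step lookup and return the temporary credentials."""
    # Step 1: the base URL returns the name to append for the second request.
    name = get(BASE_URL).strip().splitlines()[0]
    # Step 2: the named URL returns the JSON credential document.
    document = json.loads(get(BASE_URL + name))
    return {
        "access_key_id": document["AccessKeyId"],
        "secret_access_key": document["SecretAccessKey"],
        "session_token": document["Token"],
    }
```

Passing the request function as a parameter keeps the lookup logic separate from how the request is delivered, so the same two steps apply whether the request is made directly on the instance or relayed through a vulnerable application.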

Other AWS services, such as AWS Glue, make use of the EC2 service when deploying certain resources. If an attacker were able to gain access to an AWS Glue Development Endpoint, they would find that most of the EC2 meta-data API has been removed. The keyword in that sentence is “most”, because it was found that the part of the meta-data API that returns credentials is still available at the following URL:

- http://169.254.169.254/latest/meta-data/iam/security-credentials/dummy

Regardless of what the name of the role is that is attached to your Glue Development Endpoint, the “/dummy” URL will be valid and will return temporary credentials for that role (source).

Local File Read (Through Any Method)

AWS keys can often be found in configuration files, log files, or various other places on an operating system. If a user on the system uses the AWS CLI, then their credentials are stored in their home directory, and keys might also be stored in some sort of environment variable file. An attacker with the ability to read files on the operating system may be able to read these files and use those keys for further compromise.

With local file read access on a server, it is simple to try and read from the home directories of all the users that exist to see if they use the AWS CLI. If they do, then the credentials will likely be stored in the following files:

- Linux: /home/USERNAME/.aws/credentials (or /root/.aws/credentials)

- Windows: C:\Users\USERNAME\.aws\credentials

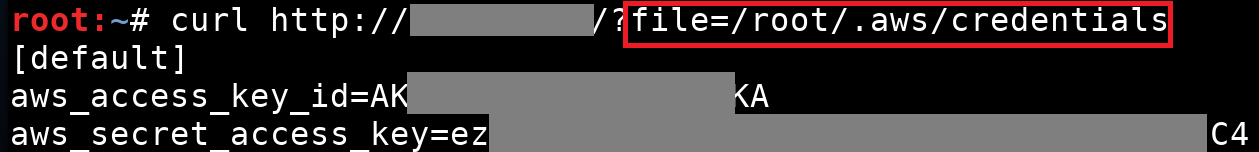

The screenshot above shows a local file read vulnerability being exploited to read the AWS credentials stored on the target server at “/root/.aws/credentials”.

Reading AWS credentials through a local file read vulnerability
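Once the contents of a credentials file have been read, pulling the keys out is straightforward, because the AWS CLI stores them in an INI-style file with one section per profile. The sketch below is one way to parse such contents, assuming the standard `aws_access_key_id`, `aws_secret_access_key`, and optional `aws_session_token` option names. The function name and sample contents are hypothetical, and the key values are AWS's documented example placeholders.

```python
import configparser


def parse_credentials_file(contents):
    """Map each profile name to the key fields found in its section."""
    # Interpolation is disabled so a literal "%" in a value is not treated
    # as configparser substitution syntax.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(contents)
    profiles = {}
    for profile in parser.sections():
        section = parser[profile]
        profiles[profile] = {
            "aws_access_key_id": section.get("aws_access_key_id"),
            "aws_secret_access_key": section.get("aws_secret_access_key"),
            "aws_session_token": section.get("aws_session_token"),
        }
    return profiles


# Hypothetical file contents, as they might come back from a file read.
sample_file = """[default]
aws_access_key_id = AKIAIOSFODNN7EXAMPLE
aws_secret_access_key = wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY
"""
print(parse_credentials_file(sample_file)["default"]["aws_access_key_id"])
```

Options that are missing from a profile, such as the session token above, come back as `None` rather than raising an error.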

On Linux, if the AWS credentials were being stored in environment variables, then it might be possible to read the contents of the file at “/proc/self/environ” to list out available environment variables. Given the ability to execute code on a system, it would be as simple as running the “env” command on Linux or a simple PowerShell command on Windows to retrieve the current environment variables.
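To make the “/proc/self/environ” case concrete: that file holds `KEY=VALUE` entries separated by NUL bytes, so its raw contents need a small amount of parsing. The following sketch shows one way to do that and to keep only variables whose names start with `AWS_` (for example `AWS_ACCESS_KEY_ID`). The function names and sample bytes are hypothetical.

```python
def parse_environ(raw):
    """Parse the NUL-separated KEY=VALUE bytes of a /proc/<pid>/environ file."""
    variables = {}
    for entry in raw.split(b"\0"):
        if b"=" not in entry:
            continue  # skips the empty trailing entry and malformed items
        # Split on the first "=" only, since values may contain "=" themselves.
        key, _, value = entry.partition(b"=")
        variables[key.decode("utf-8", "replace")] = value.decode("utf-8", "replace")
    return variables


def aws_variables(variables):
    """Keep only the variables whose names start with AWS_."""
    return {name: value for name, value in variables.items() if name.startswith("AWS_")}


# Hypothetical bytes, as they might be returned by a local file read.
raw = b"HOME=/root\0AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE\0AWS_SECRET_ACCESS_KEY=abc=def\0"
print(aws_variables(parse_environ(raw)))
```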

5. Breach in "Trusted" 3rd Parties

Many services out there today require access to your AWS environment in one way or another for their software/application/etc. to work properly. Although these services are trusted, that means that their security is being trusted as well. If a 3rd party that you trust is compromised, that could mean the attacker may be able to pivot into your own environment as well with the access/data they gained from the 3rd party.

Another example would be if you use a 3rd party password manager for handling access to various services in your environment. If they were to be compromised, an attacker could potentially gain a very high level of access to your AWS environment.

Although these scenarios seem unlikely, they do happen. One example is the breach of the “unified access management platform” (simple terms: password manager) OneLogin in 2017, where attackers gained access to customer databases that contained sensitive information (source). It is also interesting to note that this access was gained because the attackers were able to compromise a set of AWS keys belonging to the OneLogin AWS account, where the customer databases were being hosted.

6. Internal Threats/Rogue Employees

This method of compromise is often not seen as being as serious a threat as some of the others described above. The problem is that an internal threat or rogue employee would not necessarily need to compromise keys or a password to gain access to your environment. They may already have been given access and be trusted, so it is assumed that they are not malicious. People change, or may not be who they appear to be, which means they could abuse access they already have for far more malicious activities.

A prime example of this kind of threat occurred earlier this year, when an employee at Tesla reportedly hacked confidential and trade secret information from within the company and leaked said data to the press (source). The employee apparently made “direct code changes” to Tesla’s systems and exfiltrated a large amount of data in doing so.

Conclusion

As you can see, there are numerous ways for an attacker to gain access to an AWS environment, and this isn’t even necessarily an exhaustive list. As important as it is to try and prevent unauthorized access to your AWS environment, it is equally important to ensure that if/when a compromise does happen, the environment and setup are secure enough to allow for quick detection and to prevent further movement into the environment through something like privilege escalation (see our blog post on AWS privilege escalation here).