In our last AWS penetration testing post, we explored what a pentester could do after compromising the credentials of a cloud server. In this installment, we’ll look at Amazon Web Services (AWS) from a no-credential position, focusing on potential security vulnerabilities in AWS S3 “Simple Storage” buckets.

After walking through the AWS S3 methodology, we’ll apply it to the Alexa top 10,000 sites to identify which of the most popular sites are exposed to these vulnerabilities. Each site with open S3 permissions was contacted by Rhino Security Labs in advance for remediation.

WHAT IS AMAZON S3?

Amazon Simple Storage Service (S3) is an AWS service for users to store data in a secure manner. S3 bucket permissions are secure by default, meaning that upon creation, only the bucket and object owners have access to the resources on the S3 server, as explained in the S3 FAQ. You can add further access control to your bucket by using Identity and Access Management (IAM) policies, making S3 a very powerful resource for restricting data to authorized users.

Creating new users and muddling through security policies can be complicated and time-consuming, causing less experienced companies to open permissions they didn’t intend. IAM has many parallels with Windows group policies, giving groups and their users very specific access and control permissions.

Like Windows policy errors, lenient identity management on an S3 bucket can vary in impact – from minor information leakage to full data breach. Some sites, for example, use S3 as a platform for serving assets such as images and JavaScript. Others push complete server backups to the cloud. As with any security vulnerability, the risk is not just the presence of the issue, but the context as well.

For a penetration tester, a quick check for the existence and configuration of S3 buckets can be an easy win and is always worthwhile.

PASSIVE RECON: DETERMINE THE REGION

Many AWS applications are not configured behind a web application firewall (WAF), making it straightforward to identify the region of the server using nslookup. If the server is behind a WAF, you may need alternative methods to determine a target’s IP address.

Running nslookup against the flaws.cloud IP address reveals it’s hosted in us-west-2. The entire flAWS CTF, which covers AWS security, can be found here: http://flaws.cloud/
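The lookup can also be scripted. The sketch below is one possible approach, assuming `dig` is installed (it stands in for nslookup here because its `+short` output is easier to parse) and that the reverse DNS name embeds the region, as S3 website endpoints such as s3-website-us-west-2.amazonaws.com do. The function names are illustrative and not part of any tool.

```shell
# Read DNS names on stdin and print the AWS region embedded in the first
# S3 endpoint name found, e.g. "s3-website-us-west-2.amazonaws.com." -> "us-west-2".
# Prints nothing if no name carries a region.
extract_region() {
  sed -nE 's/^.*s3[.-](website[.-])?([a-z]{2}(-[a-z]+)+-[0-9]+)\.amazonaws\.com\.?$/\2/p' | head -n 1
}

# Resolve a hostname, reverse-resolve its first IPv4 address, and extract the region.
# Assumption: the host is not fronted by a WAF or CDN.
lookup_region() {
  ip=$(dig +short "$1" | grep -E '^[0-9.]+$' | head -n 1)
  [ -n "$ip" ] || return 1
  dig +short -x "$ip" | extract_region
}
```

Calling `lookup_region flaws.cloud` would perform the same two lookups done by hand above. Reverse DNS names that do not include a region produce no output, in which case the region has to be found another way.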

ACTIVE RECON: PROBE FOR BUCKETS

Once a region is determined, you can start general querying and enumeration of bucket names. Determining the region beforehand isn’t strictly required, but it will save time later when querying AWS. We recommend trying a combination of subdomains, domains, and top level domains to determine if your target has a bucket on S3. For example, if we were to search for an S3 bucket belonging to www.rhinosecuritylabs.com, we might try the bucket names rhinosecuritylabs.com and www.rhinosecuritylabs.com.

To check whether a bucket name is valid, we can either navigate to the S3 URL automatically assigned by Amazon, which has the format http://bucketname.s3.amazonaws.com, or use the command line as follows:
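Candidate names like these can be generated mechanically. The following is a minimal sketch, not the exact procedure used in this research: it prints the full hostname, then each parent domain down to two labels, and finally the bare name without its top level domain (that last candidate is an assumption added for illustration). It does not understand multi-part suffixes such as co.uk.

```shell
# Print candidate bucket names for a hostname, one per line.
bucket_candidates() {
  host=$1
  # Emit the hostname, then strip the leftmost label until two labels remain.
  while :; do
    printf '%s\n' "$host"
    rest=${host#*.}
    case $rest in
      *.*) host=$rest ;;
      *) break ;;
    esac
  done
  # Assumption: also try the registered name without its top level domain.
  printf '%s\n' "${host%%.*}"
}
```

For example, `bucket_candidates www.rhinosecuritylabs.com` prints www.rhinosecuritylabs.com, rhinosecuritylabs.com, and rhinosecuritylabs.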

aws s3 ls s3://$bucketname/ --region $region

If the command returns a directory listing, you’ve found a bucket with open list permissions.

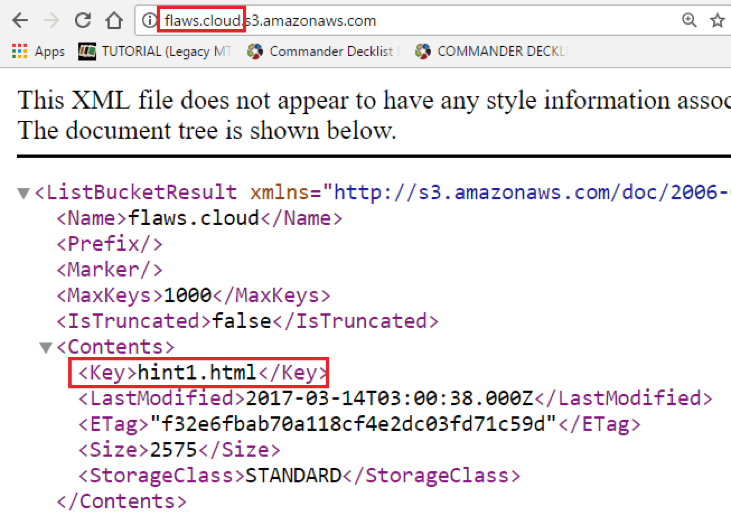

An image of the flaws.cloud S3 directory listing in the browser. Each S3 bucket is given a URL by Amazon of the format bucketname.s3.amazonaws.com
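The browser check can be approximated from the command line by looking at the HTTP status of an unauthenticated request to the bucket URL. This is a rough sketch assuming `curl` is available; the status meanings reflect how S3 commonly responds (200 with a listing, 403 AccessDenied, 404 NoSuchBucket) and the function names are hypothetical.

```shell
# Map the HTTP status of an unauthenticated GET on the bucket URL to a verdict.
classify_status() {
  case $1 in
    200) echo "listable" ;;
    403) echo "exists, access denied" ;;
    404) echo "no such bucket" ;;
    301|307) echo "likely exists, redirected to another endpoint" ;;
    *) echo "unknown ($1)" ;;
  esac
}

# Request http://<bucket>.s3.amazonaws.com/ and report the verdict.
probe_bucket() {
  code=$(curl -s -o /dev/null -w '%{http_code}' "http://$1.s3.amazonaws.com/")
  echo "$1: $(classify_status "$code")"
}
```

A 403 is still useful during recon: it suggests the bucket name is taken even though its contents cannot be listed.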

EXPLOITING S3 PERMISSIONS

Some S3 buckets are used to host static assets, such as images and JavaScript libraries. However, even for these buckets with less-sensitive assets, an open upload policy could enable an attacker to upload a custom JavaScript library, allowing them to serve malicious JavaScript (such as a BeEF hook) to all application users.

Of course, many more sensitive uses for S3 exist. In our research, we found buckets allowing downloads of system backups, source code, and more – a critical risk to the companies leaking them. Even log files that are available for download can reveal usernames, passwords, database queries and more.

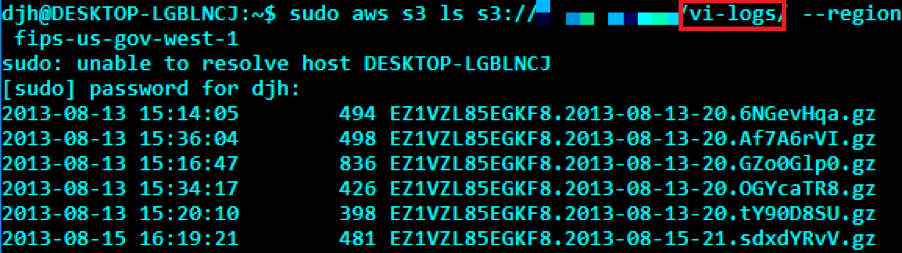

A domain in the Alexa Top 10,000 storing gzipped log files on the S3 server.

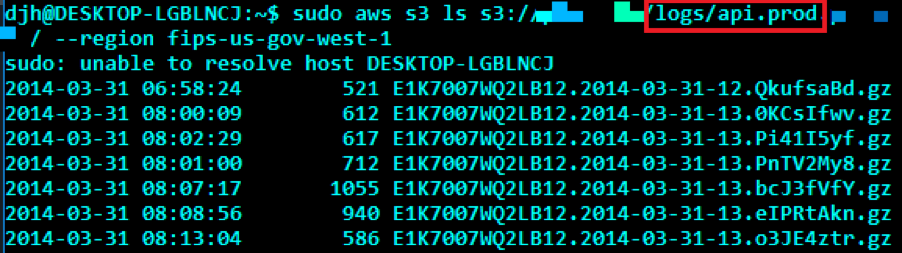

More log files from the Alexa Top 10,000. In this case, production API logs stored without authentication.

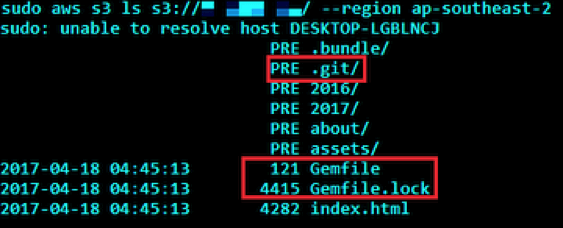

A site’s .git subdirectory stored on Amazon S3, showing source code extraction could be possible.

Entire server backups stored on S3 for an Alexa Top 10,000 site.

ALEXA TOP 10,000 SITES

Using the Alexa Top 10,000 sites, we set out to determine which sites have S3 buckets that allow directory listing, downloads, and uploads.

This was done programmatically by searching each region using the AWS CLI, issuing the command:

aws s3 ls s3://$bucketname/ --region $region

$bucketname is simply the domain name being tested, as well as any subdomains we previously found (foo.com, www.foo.com, bar.foo.com, etc). If the bucket exists and no permission errors are raised, this indicates open list permissions.

Download permissions are tested by taking a file of non-zero size and copying it to local disk. If the file is successfully transferred, this indicates open download permissions.
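A loop along these lines could drive that command over many names and regions. It is a sketch under stated assumptions rather than the script used for this study: the default region list contains only the two regions named in this article, `--no-sign-request` is added so requests are sent anonymously (drop it to test with configured credentials), and a zero exit status from `aws s3 ls` is treated as a successful listing.

```shell
# Regions to try. Only two are listed by default; override REGIONS to extend.
REGIONS=${REGIONS:-"us-east-1 us-west-2"}

# Try to list each candidate bucket in each region and print
# "<bucket> <region>" for the first region where listing succeeds.
find_listable_buckets() {
  for bucket in "$@"; do
    for region in $REGIONS; do
      if aws s3 ls "s3://$bucket/" --region "$region" --no-sign-request >/dev/null 2>&1; then
        echo "$bucket $region"
        break
      fi
    done
  done
}
```

A call such as `find_listable_buckets foo.com www.foo.com bar.foo.com` prints one line per bucket that returned a listing and nothing for the rest.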
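The download test can be sketched as follows. The parsing assumes the usual `aws s3 ls --recursive` line layout of date, time, size, and key; the helper names are made up for this example, and a recursive listing of a very large bucket may be slow.

```shell
# Read `aws s3 ls --recursive` output on stdin and print the key of the
# first object whose size is greater than zero (keys may contain spaces).
first_nonempty_key() {
  awk '$3 > 0 { sub(/^[^ ]+ +[^ ]+ +[0-9]+ /, ""); print; exit }'
}

# Copy one non-empty object from bucket $1 in region $2 to a temporary file.
# Returns 0 if the copy succeeds, which suggests open download permissions.
check_download() {
  key=$(aws s3 ls "s3://$1/" --recursive --region "$2" | first_nonempty_key)
  [ -n "$key" ] || return 1
  tmp=$(mktemp) || return 1
  aws s3 cp "s3://$1/$key" "$tmp" --region "$2" >/dev/null 2>&1
  status=$?
  rm -f "$tmp"   # the file content is not needed, only the outcome
  return $status
}
```

The temporary file is deleted immediately, so the check records whether a download is possible without retaining the target’s data.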

Sidenote

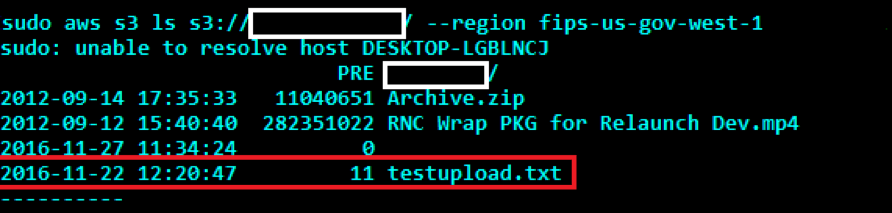

A successful upload is demonstrated (by another, unknown party) through a file called testupload.txt. This file appears in multiple S3 buckets tested, indicating that another user performed similar enumeration testing in November 2016. This information was likewise provided to the companies found to be vulnerable.

The testupload.txt file has been uploaded to several open S3 servers, indicating someone has tested upload permissions before Rhino Security Labs conducted this study.

RESULTS

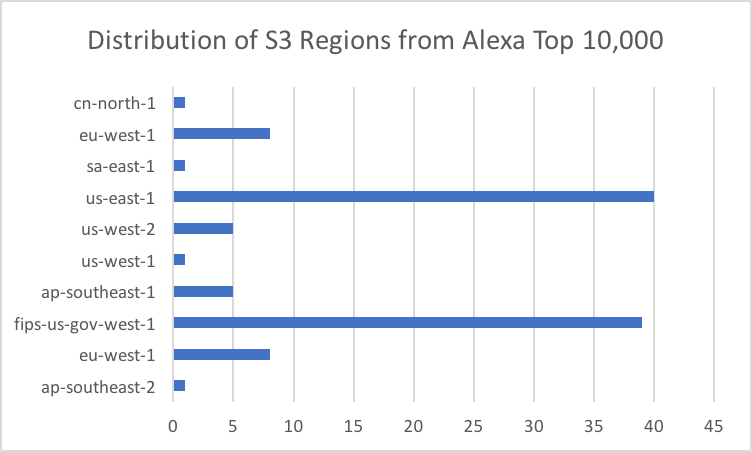

Out of the 10,000 sites audited, 107 buckets (1.07%) belonging to 68 unique domains were found with list permissions. These buckets were hosted across 10 of 11 regions, with fips-gov-us-west-1 (36% of buckets) and us-east-1 (37% of buckets) being the most popular.

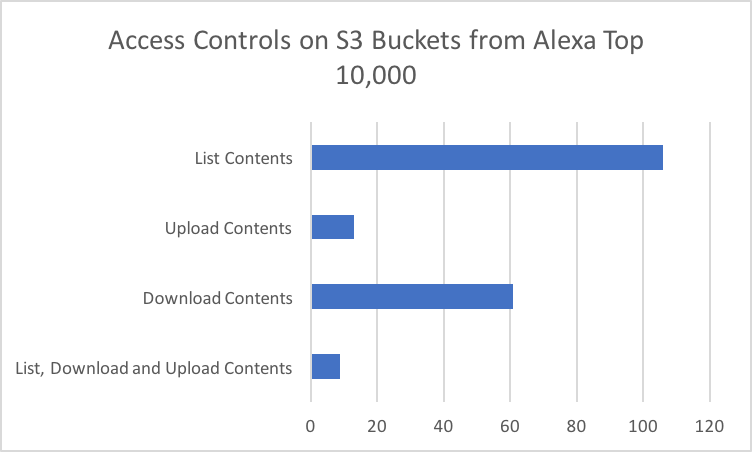

These buckets contained everything from nothing at all, to entire git repositories, to backups of enterprise Redis servers. Of these 107 buckets, 61 (57%) had open download permissions to anyone who viewed them, 13 (12%) had open upload permissions, and 8% had all three.

CONCLUSION

The number of security controls AWS provides to customers for governing their assets is staggering. Despite the “locked down by default” structure, a surprisingly large number of companies still loosen their S3 settings, allowing unauthorized access to their data.

In this research study, sensitive company data, backups, log files, and other data were identified without requiring authentication. If you’re using S3, proper IAM controls are critical for preventing the sort of information leakage demonstrated here. Implementing access logging can similarly help identify when and where your credentials are being used to access your resources.
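As a starting point for reviewing your own buckets, the sketch below checks a bucket ACL for grants to the two predefined public groups, AllUsers and AuthenticatedUsers. It assumes credentials that are allowed to call `aws s3api get-bucket-acl`, and the function names are illustrative. It only inspects ACLs; bucket policies can also open access and need a separate review.

```shell
# Succeed if the ACL JSON on stdin grants anything to a public group.
has_public_grant() {
  grep -Eq 'acs\.amazonaws\.com/groups/global/(AllUsers|AuthenticatedUsers)'
}

# Fetch the ACL of bucket $1 and report whether a public group appears in it.
audit_bucket_acl() {
  acl=$(aws s3api get-bucket-acl --bucket "$1") || {
    echo "$1: could not read ACL" >&2
    return 2
  }
  if printf '%s\n' "$acl" | has_public_grant; then
    echo "$1: ACL grants access to a public group"
  else
    echo "$1: no public group grants found in ACL"
  fi
}
```

Running `audit_bucket_acl` over every bucket in an account gives a quick first pass before a fuller review of policies and logging.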